Building a reliable, high-performing data infrastructure is no longer an optional upgrade for modern businesses; it is an absolute necessity. However, as the digital landscape expands, the sheer volume of data engineering tools entering the market has become overwhelming. From containerization and workflow orchestration to data warehousing and real-time streaming, selecting the right software stack is a critical decision that can either propel your analytics forward or trap you in operational bottlenecks.

Many organizations still rely on fragmented legacy systems or manual data extraction methods. This inevitably leads to messy, siloed data, making it nearly impossible for business intelligence teams and machine learning models to extract valuable, actionable insights. To truly leverage your data, you need an integrated pipeline built with purpose-driven technology.

Whether you are a lean startup looking for cost-effective agility or a massive enterprise handling petabytes of information, choosing the right components for your tech stack is crucial. In this comprehensive guide, we will break down the top data engineering tools by category, exploring their features, benefits, and real-world applications to help you make an informed decision for your business.

Overwhelmed by building your data stack? Let the experts at XCEEDBD.COM design and implement a custom data engineering pipeline tailored to your exact needs. Reach out today for a discovery call!

What is Data Engineering and Why is it Critical?

Data engineering is the invisible engine that powers modern businesses. It is the meticulous process of designing, building, and maintaining the complex infrastructure that allows data to flow seamlessly from its raw origin to its final destination. Without a robust data engineering foundation, even the most sophisticated artificial intelligence models and business intelligence dashboards are essentially useless.

Think of data engineering as the plumbing and electrical wiring of a modern skyscraper. It might not be the aesthetic feature that stakeholders immediately notice, but it is the absolute prerequisite for the entire building to function. By establishing efficient data pipelines, data engineering ensures that massive volumes of information are collected safely, transformed accurately, and stored securely.

A well-architected data engineering pipeline provides several critical advantages:

- Democratized Access: It breaks down data silos, making clean, structured information accessible across various departments, from marketing to finance.

- Unwavering Quality: It ensures data consistency and accuracy, filtering out anomalies before they corrupt downstream analytics.

- Scalability: It allows your technical infrastructure to grow and adapt as your data volume increases from megabytes to petabytes.

Top Containerization Tools for Data Engineering

Containerization has revolutionized how software and data pipelines are deployed. By packaging applications with all their necessary dependencies, these tools ensure that code runs identically regardless of the environment.

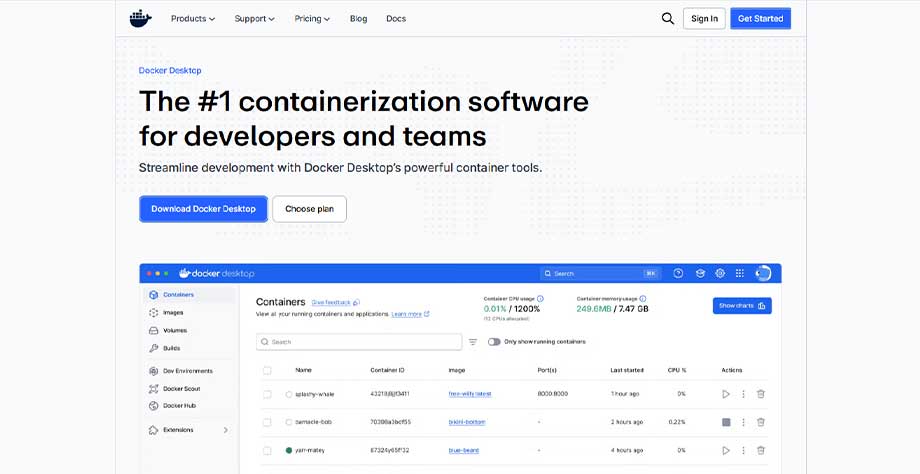

1. Docker

Docker has fundamentally changed how data engineering teams package, share, and deploy applications. By isolating software in standardized units called containers, Docker ensures that your data pipelines run with absolute consistency. It eliminates the infamous “it works on my machine” syndrome, allowing developers to create highly predictable environments.

For data pipelines that rely on a multitude of specific software dependencies, Docker provides a lightweight, agile execution space. It accelerates deployment cycles, simplifies version control, and drastically reduces the time spent on environmental troubleshooting.

- Key Features: Lightweight image creation, robust version control, seamless CI/CD integration, and exceptional portability across platforms.

- Best For: Startups and enterprises aiming to standardize their microservices architecture and data processing environments.

- Real-World Application: Packaging a complex Python-based ETL extraction script so it can be reliably triggered via a cloud scheduler without any package conflict issues.

2. Kubernetes

While Docker creates the containers, Kubernetes is the master conductor that manages them. Originally developed by Google, Kubernetes automates the deployment, scaling, and operational management of containerized applications across clusters of hosts.

When your data engineering infrastructure grows beyond a few simple scripts into hundreds of interacting microservices, Kubernetes steps in to maintain order. It monitors container health, dynamically scales resources based on traffic demands, and handles load balancing.

- Key Features: Automated rollouts and rollbacks, self-healing capabilities, horizontal scaling, and built-in load balancing.

- Best For: Fast-growing startups and large enterprises running highly complex, distributed data applications at scale.

- Real-World Application: Automatically adding container replicas (and, where a cluster autoscaler is configured, nodes) to handle a massive, unexpected surge in Black Friday transaction data, and scaling them down when traffic subsides.
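To make the horizontal scaling idea concrete, here is a minimal Python sketch of the ratio rule that the Kubernetes Horizontal Pod Autoscaler documentation describes: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The function name, the default bounds, and the sample numbers are illustrative assumptions, and the real autoscaler applies further rules (such as stabilization windows) that are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Suggest a replica count from the ratio of observed to target metric."""
    ratio = current_metric / target_metric
    # Inside the tolerance band the replica count is left unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Clamp the result to the configured lower and upper bounds.
    return max(min_replicas, min(max_replicas, wanted))


# Traffic surge: 4 replicas at 90% average CPU against a 50% target.
surge = desired_replicas(4, current_metric=90, target_metric=50)
# Traffic subsides: 8 replicas at 20% average CPU against the same target.
quiet = desired_replicas(8, current_metric=20, target_metric=50)
print(surge, quiet)
```

In this sketch the surge case scales out and the quiet case scales back in, which is the same behavior described in the Black Friday example above.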

Infrastructure as Code (IaC) Solutions

Infrastructure as Code allows data engineering teams to provision and manage cloud infrastructure using clean, readable code rather than manual, error-prone console clicks.

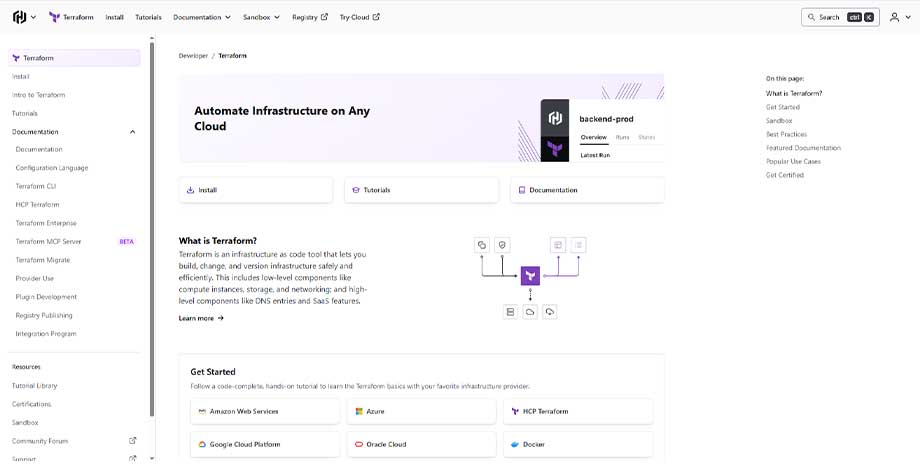

3. Terraform

Created by HashiCorp, Terraform is one of the most widely adopted tools for managing cloud infrastructure through declarative configuration files. It supports multiple cloud providers, including AWS, Microsoft Azure, and Google Cloud Platform, allowing teams to manage hybrid or multi-cloud environments with a single workflow.

Terraform allows data engineers to define exactly what their infrastructure should look like. It does not monitor resources continuously. Instead, if a configuration is changed by hand or a resource disappears, running `terraform plan` surfaces the drift between the real environment and the defined state, and `terraform apply` brings the system back in line, promoting rigorous DevOps best practices.

- Key Features: Provider-agnostic architecture, precise execution plans, modular infrastructure design, and strong version control.

- Best For: Organizations utilizing multi-cloud environments that require repeatable, consistent infrastructure deployments.

- Real-World Application: Quickly provisioning identical staging and production cloud environments for a new data warehouse deployment with a single command.
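The declarative model is easier to picture with a toy example. The Python sketch below compares a desired definition with the state that actually exists and lists the changes needed to reconcile them. It is not Terraform's implementation or API; the resource names, attributes, and the `plan`/`apply` helper functions are hypothetical and exist only to illustrate the idea of drift detection.

```python
def plan(desired, actual):
    """List the changes needed to make the actual state match the desired one."""
    changes = []
    for name, want in desired.items():
        have = actual.get(name)
        if have is None:
            changes.append(("create", name))
        elif have != want:
            changes.append(("update", name))
    for name in actual:
        if name not in desired:
            changes.append(("delete", name))
    return changes


def apply(desired, actual):
    """Return a new actual state that mirrors the desired definition."""
    return {name: dict(attrs) for name, attrs in desired.items()}


# Hypothetical resources: one has drifted, one is missing, one is left over.
desired = {
    "warehouse_bucket": {"versioning": True},
    "etl_vm": {"size": "medium"},
}
actual = {
    "warehouse_bucket": {"versioning": False},
    "old_vm": {"size": "small"},
}

changes = plan(desired, actual)
print(changes)
actual = apply(desired, actual)
print(plan(desired, actual))  # nothing left to change after applying
```

The key design point is that the engineer describes the end state, and the tool works out the individual create, update, and delete steps.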

4. Pulumi

Pulumi takes the concept of Infrastructure as Code a step further by allowing engineers to write infrastructure logic in familiar, general-purpose programming languages like TypeScript, Python, Go, and C#.

Unlike Terraform, which requires learning a domain-specific language (HCL), Pulumi leverages the existing skills of your software development team. This significantly lowers the barrier to entry for developers who want to contribute to infrastructure management while utilizing standard testing and debugging tools.

- Key Features: Support for standard programming languages, seamless cloud native integrations, and robust state management.

- Best For: Development-heavy teams that prefer using familiar coding languages to manage and test their cloud infrastructure.

- Real-World Application: Using Python to programmatically loop through a list of regions and deploy identical data storage buckets across all of them efficiently.

Workflow Orchestration Platforms

Orchestration tools are the central nervous system of your data infrastructure. They schedule, monitor, and manage the complex dependencies between various data tasks.

5. Prefect

Prefect is a modern, Python-native workflow orchestration tool designed to handle complex data pipelines. Built to overcome the limitations of older orchestration systems, Prefect lets engineers turn ordinary Python functions into workflows, offering a developer-friendly experience.

It removes the heavy boilerplate code traditionally required for task scheduling. With Prefect, data engineers can easily add observable, resilient orchestration to their existing Python code, benefiting from automatic retries, dynamic mapping, and a highly intuitive user interface.

- Key Features: Minimal boilerplate requirements, exceptional debugging capabilities, dynamic workflows, and great support for hybrid cloud models.

- Best For: Agile data teams that want modern, observable orchestration without sacrificing the flexibility of native Python code.

- Real-World Application: Orchestrating a machine learning pipeline that fetches daily API data, transforms it, and retries automatically if the external API temporarily times out.
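To show what automatic retries do for a flaky API call, here is a plain-Python sketch of a retry decorator with exponential backoff. It is not Prefect's API; the `with_retries` decorator, its parameters, and the `fetch_daily_data` function are assumptions made for illustration. An orchestrator handles this kind of logic for you, so you would not normally hand-roll it.

```python
import functools
import time


def with_retries(max_retries=3, base_delay=1.0, sleep=time.sleep):
    """Retry a function on TimeoutError, doubling the delay after each failure."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(max_retries + 1):
                try:
                    return func(*args, **kwargs)
                except TimeoutError:
                    # Give up once the retry budget is exhausted.
                    if attempt == max_retries:
                        raise
                    sleep(base_delay * 2 ** attempt)
        return wrapper
    return decorator


calls = {"count": 0}
delays = []  # records the requested delays instead of actually sleeping


@with_retries(max_retries=3, base_delay=0.5, sleep=delays.append)
def fetch_daily_data():
    """Hypothetical API call that times out on its first two attempts."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TimeoutError("external API timed out")
    return {"rows": 42}


result = fetch_daily_data()
print(result, calls["count"], delays)
```

Passing `sleep` as a parameter keeps the example instant to run; in a real pipeline the default `time.sleep` would pause between attempts.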

6. Luigi

Originally developed and open-sourced by Spotify, Luigi is a robust, Python-based tool built specifically to manage complex pipelines of batch processing jobs.

Luigi excels at handling dependency resolution. If job C depends on job B, which depends on job A, Luigi ensures they execute in the correct order. It focuses heavily on workflow management, offering a visual dashboard to track the progress of long-running batch tasks across the system.

- Key Features: Built-in dependency management, strong failure recovery, straightforward visualization, and proven stability in massive production systems.

- Best For: Enterprises with highly complex, sequential batch processing pipelines that require strict dependency enforcement.

- Real-World Application: Managing an overnight data pipeline that aggregates millions of user listening events into daily summary tables before generating management reports.
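The A, B, C dependency chain described above can be sketched with Python's standard-library `graphlib` module (Python 3.9 or later). This is not Luigi code; the job names and the `run_pipeline` helper are hypothetical. It only illustrates how a scheduler derives a valid execution order from declared dependencies and skips work that is already complete.

```python
from graphlib import TopologicalSorter

# Each job maps to the set of jobs it depends on.
dependencies = {
    "job_a": set(),
    "job_b": {"job_a"},
    "job_c": {"job_b"},
}


def run_pipeline(dependencies, completed=()):
    """Return the jobs to execute, in dependency order, skipping finished ones."""
    done = set(completed)
    executed = []
    for job in TopologicalSorter(dependencies).static_order():
        if job in done:
            continue
        executed.append(job)
        done.add(job)
    return executed


full_run = run_pipeline(dependencies)
resumed_run = run_pipeline(dependencies, completed={"job_a"})
print(full_run)
print(resumed_run)
```

The second call mimics resuming after a partial failure: only the jobs that have not yet finished are scheduled again.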

Leading Data Warehouse Solutions

The data warehouse is the central repository where all structured data is stored, optimized, and prepared for high-speed analytical querying.

7. Snowflake

Snowflake is a cloud-native data warehouse that separates compute from storage. This architecture means you can scale your storage capacity and your processing power independently, and you are billed for each based on usage.

Snowflake requires virtually no infrastructure management. It seamlessly handles structured and semi-structured data (like JSON), provides out-of-the-box data sharing capabilities, and delivers blistering query speeds even when hundreds of users are accessing the system simultaneously.

- Key Features: Zero-management architecture, independent scaling of compute and storage, native support for semi-structured data, and secure data sharing.

- Best For: Businesses of all sizes looking for a powerful, zero-maintenance analytics database with highly predictable scalability.

- Real-World Application: Consolidating sales, marketing, and operational data into a single, highly concurrent repository accessible to analysts globally without performance degradation.

8. PostgreSQL

PostgreSQL is an incredibly powerful, open-source object-relational database system with over three decades of active development. While traditionally known as a transactional database, its robust architecture makes it highly capable as a foundational data warehouse for smaller operations.

It is renowned for its absolute reliability, robust feature set, and strict compliance with SQL standards. Because it supports advanced data types like JSONB, it serves as an excellent, versatile bridge between traditional relational data and modern, document-style workloads.

- Key Features: Completely free and open-source, highly extensible architecture, robust transaction integrity, and advanced indexing capabilities.

- Best For: Startups and mid-market companies needing a highly reliable, cost-effective database that can handle both transactional and analytical workloads.

- Real-World Application: Serving as the central database for a growing e-commerce application while simultaneously supporting complex backend analytics queries.

Stop struggling with inefficient data systems holding back your growth. Partner with XCEEDBD.COM to deploy a scalable, enterprise-grade data warehouse. Schedule your free technical consultation today!

Analytics Engineering & BI Tools

Analytics engineering bridges the gap between raw data and actionable business intelligence, allowing teams to transform and visualize data efficiently.

9. dbt (Data Build Tool)

dbt has transformed how analytics engineering operates by allowing data analysts and engineers to transform raw data directly inside the warehouse using simple, modular SQL statements.

Instead of building complex, fragile ETL pipelines outside the database, dbt leverages the immense processing power of the modern data warehouse to perform transformations (the “T” in ELT). It brings software engineering best practices like version control, testing, and documentation to data analysis.

- Key Features: Version-controlled transformations, automated data testing, modular SQL development, and auto-generated documentation.

- Best For: Modern data teams that want to empower SQL-savvy analysts to own the entire data transformation pipeline.

- Real-World Application: Taking raw customer event logs stored in Snowflake and using dbt to transform them into clean, tested, and reliable daily active user metrics.
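The daily active user example can be sketched in a few lines. In dbt, a model is a SQL `SELECT` statement and a data test is a query that passes when it returns zero rows. The Python snippet below imitates that pattern with the standard-library `sqlite3` module standing in for the warehouse. The table names, columns, and sample events are invented for illustration, and this is not dbt's own tooling.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a real warehouse
conn.execute("CREATE TABLE raw_events (user_id TEXT, event_ts TEXT)")
conn.executemany(
    "INSERT INTO raw_events VALUES (?, ?)",
    [
        ("u1", "2024-01-01 09:00:00"),
        ("u1", "2024-01-01 17:30:00"),
        ("u2", "2024-01-01 12:15:00"),
        ("u1", "2024-01-02 08:05:00"),
        (None, "2024-01-02 10:00:00"),  # malformed event with no user
    ],
)

# The "model": a SELECT that turns raw events into a clean daily metric.
conn.execute("""
    CREATE TABLE daily_active_users AS
    SELECT date(event_ts) AS activity_date,
           COUNT(DISTINCT user_id) AS active_users
    FROM raw_events
    WHERE user_id IS NOT NULL
    GROUP BY date(event_ts)
""")

# The "tests": each query selects failing rows, so an empty result is a pass.
null_dates = conn.execute(
    "SELECT * FROM daily_active_users WHERE activity_date IS NULL"
).fetchall()
duplicate_dates = conn.execute(
    "SELECT activity_date FROM daily_active_users "
    "GROUP BY activity_date HAVING COUNT(*) > 1"
).fetchall()

rows = conn.execute(
    "SELECT activity_date, active_users FROM daily_active_users "
    "ORDER BY activity_date"
).fetchall()
print(rows, null_dates, duplicate_dates)
```

Because the transformation runs inside the database, the heavy lifting stays in the warehouse engine rather than in an external script.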

10. Metabase

Metabase is a highly intuitive, open-source Business Intelligence (BI) tool designed to make data exploration accessible to absolutely everyone in a company, regardless of their technical background.

It allows non-technical users to ask questions of the data through a simple, visual interface, while still giving data engineers the ability to write raw SQL for highly complex queries. It is incredibly fast to set up and integrates beautifully with modern data warehouses.

- Key Features: Non-technical visual query builder, rapid dashboard creation, open-source flexibility, and automated reporting functionality.

- Best For: Startups and organizations that need a lightweight, user-friendly BI tool to democratize data access across all departments immediately.

- Real-World Application: Allowing a marketing manager to easily generate a visual dashboard showing weekly campaign ROI without needing to submit a ticket to the data team.

Batch Processing Powerhouses

Batch processing tools are designed to handle massive volumes of historical data, performing complex computations on large datasets at scheduled intervals.

11. Apache Spark

Apache Spark is a fast, general-purpose unified analytics engine built for large-scale data processing. It often outperforms older disk-based frameworks such as Hadoop MapReduce by keeping intermediate results in memory where possible instead of writing them to disk between steps.

Spark is incredibly versatile. It supports both large batch processing jobs and micro-batch stream processing. With rich APIs available in Scala, Python, Java, and R, it has become a de facto standard for big data processing and complex machine learning workflows.

- Key Features: In-memory computation speed, unified batch and stream processing capabilities, extensive machine learning libraries (MLlib), and high fault tolerance.

- Best For: Enterprises dealing with massive datasets that require extremely fast data processing and advanced machine learning model training.

- Real-World Application: Processing terabytes of historical website clickstream data overnight to train a highly personalized product recommendation engine for an e-commerce platform.

12. Apache Hadoop

Hadoop is the legendary pioneer in distributed data processing. While newer technologies have emerged, Hadoop remains a foundational pillar in many mature enterprise data ecosystems. It excels at distributing massive datasets across clusters of inexpensive commodity hardware.

At the core of Hadoop is the Hadoop Distributed File System (HDFS), which provides highly scalable and reliable data storage. While it may not match the speed of in-memory processors, it offers strong resilience and cost-efficiency for storing and processing colossal amounts of unstructured data.

- Key Features: Highly scalable HDFS storage, MapReduce processing framework, massive fault tolerance, and deep enterprise ecosystem integration.

- Best For: Very large enterprises with mature, legacy data ecosystems that need to store and process virtually limitless amounts of raw data economically.

- Real-World Application: Storing decades worth of raw, unstructured financial compliance logs securely and running intensive, long-running batch audits across the entire historical dataset.
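The MapReduce model mentioned above has three phases: map each record to key-value pairs, shuffle the pairs so values with the same key are grouped, and reduce each group to a result. The single-process Python sketch below illustrates those phases on a handful of made-up log lines. Hadoop runs the same phases distributed across a cluster; the function names and sample data here are assumptions for illustration only.

```python
from collections import defaultdict


def map_phase(record):
    """Emit one (log_level, 1) pair per log line."""
    level = record.split()[0]
    yield level, 1


def shuffle(pairs):
    """Group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Collapse the values for one key into a single total."""
    return key, sum(values)


def map_reduce(records):
    pairs = (pair for record in records for pair in map_phase(record))
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())


logs = [
    "ERROR payment failed",
    "INFO login ok",
    "ERROR timeout",
    "WARN slow query",
]
counts = map_reduce(logs)
print(counts)
```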

Real-Time Streaming Platforms

When data loses its value seconds after it is generated, real-time streaming tools are required to capture, process, and analyze information instantly.

13. Apache Kafka

Apache Kafka is an open-source, distributed event streaming platform built to handle trillions of events a day. Initially developed by LinkedIn, Kafka is the central nervous system for real-time data architectures across the globe.

It operates as a highly durable, fault-tolerant message broker. It allows thousands of producer applications to publish real-time data streams and thousands of consumer applications to subscribe to and read those streams simultaneously, all with exceptionally low latency.

- Key Features: Unprecedented high throughput, durable message storage, massive horizontal scalability, and seamless microservices integration.

- Best For: Organizations of any size that need to build resilient, real-time data pipelines and heavily decoupled microservices architectures.

- Real-World Application: Capturing real-time credit card swipe data from global point-of-sale systems and streaming it instantly to fraud detection algorithms.
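The publish and subscribe model can be illustrated with a toy in-memory log. In the Python sketch below, producers append records to a log and each consumer group keeps its own read position, so several groups can read the same stream independently. The `TopicLog` class, group names, and sample records are hypothetical. Real Kafka adds partitions, replication, and durable storage, none of which are modeled here.

```python
class TopicLog:
    """A single-partition, append-only log with per-group read offsets."""

    def __init__(self):
        self._records = []
        self._offsets = {}

    def publish(self, record):
        """Append a record and return its offset in the log."""
        self._records.append(record)
        return len(self._records) - 1

    def poll(self, group, max_records=10):
        """Return unread records for a consumer group and advance its offset."""
        start = self._offsets.get(group, 0)
        batch = self._records[start:start + max_records]
        self._offsets[group] = start + len(batch)
        return batch


topic = TopicLog()
topic.publish({"card": "1111", "amount": 25.0})
topic.publish({"card": "2222", "amount": 9800.0})

fraud_batch = topic.poll("fraud-detection")
topic.publish({"card": "3333", "amount": 12.5})
analytics_batch = topic.poll("analytics")      # reads from the beginning
fraud_next = topic.poll("fraud-detection")     # resumes where it left off
print(len(fraud_batch), len(analytics_batch), fraud_next)
```

Because reading does not remove records, adding a new consumer group never interferes with existing ones, which is what makes this pattern useful for decoupling services.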

14. Apache Flink

Apache Flink is a powerful framework and distributed processing engine designed for highly sophisticated stateful computations over unbounded data streams.

While Kafka is excellent at moving the data, Flink is exceptional at processing it the moment it arrives. Flink provides low-latency processing and, through its checkpointing mechanism, exactly-once guarantees for application state, making it well suited to event-driven applications and complex real-time analytics where accuracy is critical. End-to-end exactly-once delivery additionally depends on the sources and sinks involved.

- Key Features: True event-at-a-time stream processing, robust state management, exactly-once processing guarantees, and seamless batch processing capabilities.

- Best For: Enterprises requiring hyper-accurate, sub-second real-time analytics and complex event processing systems.

- Real-World Application: Monitoring real-time IoT sensor data from manufacturing equipment to instantly trigger predictive maintenance alerts the millisecond an anomaly is detected.
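"Stateful computation over an unbounded stream" means the processor remembers something per key between events. The plain-Python sketch below keeps a running mean per sensor and flags a reading that deviates sharply from it, processing one event at a time. It is not Flink code; the `SensorMonitor` class, the threshold, and the sample readings are assumptions for illustration, and a real Flink job would also checkpoint this state for fault tolerance.

```python
from collections import defaultdict


class SensorMonitor:
    """Flag readings that deviate from a per-sensor running mean."""

    def __init__(self, threshold=10.0):
        self.threshold = threshold
        # Keyed state: sensor_id -> [event count, running mean]
        self.state = defaultdict(lambda: [0, 0.0])

    def process(self, sensor_id, reading):
        """Handle one event and return True if it looks anomalous."""
        count, mean = self.state[sensor_id]
        alert = count > 0 and abs(reading - mean) > self.threshold
        # Update the running mean incrementally, without storing past events.
        count += 1
        mean += (reading - mean) / count
        self.state[sensor_id] = [count, mean]
        return alert


stream = [
    ("pump-1", 70.0),
    ("pump-2", 20.0),
    ("pump-1", 72.0),
    ("pump-1", 95.0),
    ("pump-2", 21.0),
]

monitor = SensorMonitor(threshold=10.0)
alerts = [(sensor, value) for sensor, value in stream if monitor.process(sensor, value)]
print(alerts)
```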

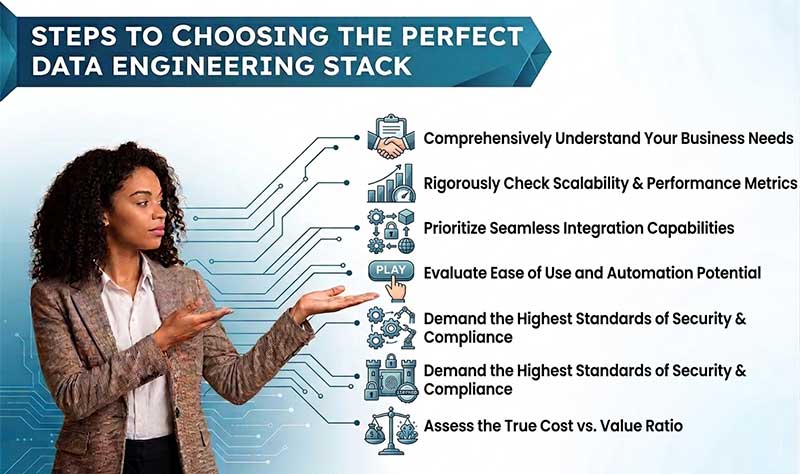

6 Steps to Choosing the Perfect Data Engineering Stack

Selecting the right data engineering tools is a high-stakes decision. To avoid costly migrations and operational friction, follow this strategic evaluation process:

1. Comprehensively Understand Your Business Needs: Before evaluating any software, you must define your exact use case. Are you building a system to generate end-of-month financial reports, or do you need to detect fraudulent transactions in real-time? Batch processing tools are sufficient for the former, while real-time streaming tools are mandatory for the latter.

2. Rigorously Check Scalability & Performance Metrics: Your data infrastructure must be future-proof. A tool that performs flawlessly with a gigabyte of data might completely fail when subjected to terabytes. Evaluate how the software handles resource allocation. Look for cloud-native solutions that offer independent scaling of compute and storage to ensure optimal performance without bloated costs.

3. Prioritize Seamless Integration Capabilities: No data tool operates in a vacuum. The software you choose must integrate frictionlessly with your existing ecosystem. Verify that the new tool has native connectors or robust APIs that communicate easily with your current cloud provider, your business intelligence dashboards, and your core operational applications.

4. Evaluate Ease of Use and Automation Potential: Complexity is the enemy of execution. If a tool requires a massive team of specialized engineers just to maintain basic functionality, it will drain your resources. Look for platforms that prioritize intuitive interfaces, declarative configurations, and extensive automation features. The goal is to accelerate your data processes, not bog your team down in maintenance tasks.

5. Demand the Highest Standards of Security & Compliance: Data breaches are catastrophic. Any tool you integrate into your pipeline must feature enterprise-grade security protocols. Ensure the software provides granular role-based access control (RBAC), end-to-end data encryption, and verifiable adherence to strict regulatory frameworks such as GDPR, HIPAA, and CCPA.

6. Assess the True Cost vs. Value Ratio: The initial licensing fee or server cost is only a fraction of the total cost of ownership. You must factor in the engineering hours required for implementation, training, and ongoing maintenance. An expensive, fully managed cloud tool might actually save your company thousands of dollars by freeing up your engineers to work on high-value analytics rather than managing server infrastructure.
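The cost comparison in the final step is simple arithmetic once the inputs are written down. The Python sketch below adds yearly fees to the engineering hours spent on setup and maintenance over a chosen period. Every figure in it is a made-up placeholder, not a quote or benchmark, and the `total_cost_of_ownership` function is a hypothetical helper. Substitute your own estimates before drawing any conclusion.

```python
def total_cost_of_ownership(fees_per_year, setup_hours, maintenance_hours_per_month,
                            hourly_rate, years=3):
    """Sum recurring fees and engineering labor over the evaluation period."""
    labor_hours = setup_hours + maintenance_hours_per_month * 12 * years
    return fees_per_year * years + labor_hours * hourly_rate


# Placeholder inputs: a self-hosted option with low fees but heavy upkeep...
self_hosted = total_cost_of_ownership(
    fees_per_year=12_000, setup_hours=200,
    maintenance_hours_per_month=60, hourly_rate=80,
)
# ...versus a managed service with higher fees but little upkeep.
managed = total_cost_of_ownership(
    fees_per_year=40_000, setup_hours=40,
    maintenance_hours_per_month=10, hourly_rate=80,
)
print(self_hosted, managed, self_hosted - managed)
```

With these placeholder numbers the managed option comes out cheaper over three years, but the result flips easily if maintenance hours are lower or fees are higher, which is why the inputs matter more than the formula.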

Conclusion

Choosing the right data engineering tools is the foundational step toward building a data-driven organization. Whether you are leaning on Docker for containerization, Prefect for orchestration, or Snowflake for massive data warehousing, the goal remains the same: transforming raw, chaotic data into a clean, accessible, and highly valuable corporate asset. By carefully evaluating your business needs and prioritizing scalable, integrative solutions, you can build an infrastructure that drives unparalleled growth.

Frequently Asked Questions (FAQs)

1. What are the most popular data engineering tools used today? The most widely adopted tools span various categories. Snowflake and PostgreSQL are incredibly popular for data warehousing, Apache Kafka dominates real-time event streaming, Apache Spark is the standard for massive batch processing, and dbt has become the go-to solution for modern analytics engineering and SQL transformations.

2. Which specific tools do data engineers use on a daily basis? A data engineer’s daily toolkit typically includes a mix of programming languages (like Python or SQL), workflow orchestration platforms (such as Prefect or Airflow), infrastructure as code software (like Terraform), and containerization platforms (like Docker) to reliably build, test, and deploy code.

3. What is the core difference between data engineering and data analytics tools? Data engineering tools (like Kafka, Hadoop, and Kubernetes) are designed to build the “plumbing”—extracting, processing, and securely storing raw data. Data analytics tools (like Metabase or Tableau) connect to that processed data, allowing users to create visual dashboards, identify trends, and extract business insights.

4. How do I choose the right data stack for my lean startup? Startups should prioritize fully managed, cloud-native tools that require minimal infrastructure maintenance and offer pay-as-you-go pricing models. A common modern startup stack includes a managed ETL connector, Snowflake for cloud data warehousing, dbt for internal data transformations, and Metabase for lightweight, accessible reporting.

5. What are the best open-source data engineering tools available? The open-source community is highly active in data engineering. Leading open-source tools include Apache Spark for processing, Apache Kafka for real-time streaming, PostgreSQL for database management, and Metabase for business intelligence visualization.

6. Do I need real-time data streaming tools for my business? It entirely depends on your specific use case. If your business relies on instantaneous actions—such as live fraud detection, immediate inventory updates, or real-time ride-sharing algorithms—then streaming tools like Kafka are essential. If you primarily use data for weekly reporting, standard batch processing is sufficient and much more cost-effective.

7. Why is Infrastructure as Code (IaC) important for data engineering? IaC tools like Terraform and Pulumi allow data teams to manage their server and cloud infrastructure using version-controlled code. This reduces manual configuration errors, speeds up disaster recovery, and helps keep development, staging, and production environments consistent with one another.

8. Can XCEEDBD.COM help me migrate from legacy data systems? Yes. Migrating from outdated legacy systems to a modern data stack requires careful planning to prevent data loss. The technical experts at XCEEDBD.COM specialize in securely auditing, designing, and executing complex data infrastructure migrations tailored specifically to your business goals.